Ten things dentists should look for when evaluating on-page SEO

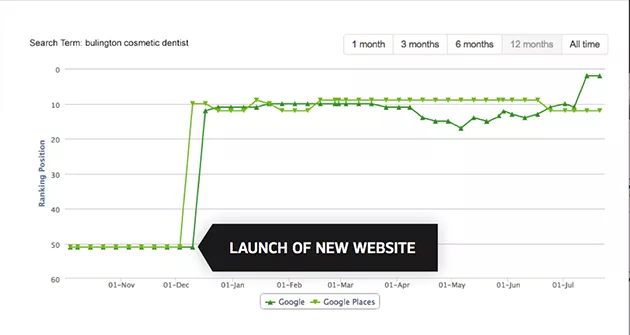

On-page search engine optimization (SEO) is one of the most important factors in ranking well on search engine results pages. If your on-page SEO isn’t solid, the rest of your SEO efforts will have only a limited effect. Your website design company should have handled this already, but if you’re not sure, audit your own site to confirm it was built properly.

On-page SEO considers how your website is built and the content on the site. Evaluating your on-page SEO may be difficult because many indicators of good search engine optimization are below the surface. Here are ten factors to consider:

1. Keywords – Set your website up for success by targeting each page at a specific keyword or keyword niche. Search engines scan your site to determine its general theme, so it’s essential that your keywords show up in your copy (the written text on each page).

Read through the copy on your site. Each page should be optimized for a few keywords or a keyword niche that you can easily identify as you read. For example, your cosmetic dentistry services page might be optimized for “cosmetic dentistry [your city].” Your copy should include the keywords you’re targeting and run to at least 200 words. If you don’t have 200 words to say about a page’s subject, consider merging a few related pages into one.
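For pages you can open as HTML (for example, saved from your browser’s “View Page Source”), the read-through above can be backed up with a quick script. The Python sketch below is one minimal way to do it, not a full SEO tool: it collects the page’s text with the standard library’s `html.parser`, counts the words, and checks whether the target phrase appears word for word. The helper names, the sample page, and the 200-word default (taken from the rule of thumb above) are our own illustrative choices.

```python
from html.parser import HTMLParser

# Tags whose contents a visitor never reads as page copy.
SKIP_TAGS = {"script", "style", "title"}


class VisibleTextParser(HTMLParser):
    """Collects the text fragments of a page, ignoring the contents of SKIP_TAGS."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def check_copy(html, keyword, min_words=200):
    """Count words of copy and test whether the exact keyword phrase appears."""
    parser = VisibleTextParser()
    parser.feed(html)
    # Fragments are joined with spaces, so a word split across tags may count as two.
    words = " ".join(parser.chunks).split()
    text = " ".join(words).lower()
    phrase = " ".join(keyword.lower().split())
    return {
        "word_count": len(words),
        "has_keyword": phrase in text,
        "long_enough": len(words) >= min_words,
    }
```

For example, `check_copy(page_html, "cosmetic dentistry")` returns the word count plus two yes/no flags. Exact-phrase matching is deliberately strict, so treat a miss as a prompt to reread the copy rather than as proof the page is badly optimized.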

2. Fully readable and structured text – Your website should be organized so that search engines can scan it easily. All text on your site should be readable by search engines, with no text embedded in images. To check whether the text on a page is readable by search engines, try highlighting it with your mouse. If you can’t highlight it, the text is probably part of an image.

Semantic HTML and schema markup are ways to structure your text so that the most important information stands out to Google. The H1 (heading 1) tag generally tells search engines what your web page is about. H2, H3, and so on are used for subheadings, and paragraph tags (`<p>`) mark regular text. To see whether this has been done on your website, right-click the page and choose “View Page Source” (the wording varies by browser), then search for `<h1` to see what shows up. The most important title on the page should use the H1 tag, the second most important titles should use H2, and so on.
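If reading raw source feels tedious, a short script can list a page’s headings in order. This Python sketch, a minimal example rather than an official checker, uses the standard library’s `html.parser` to record each `<h1>` through `<h6>` with its text, counts the H1s, and flags jumps of more than one level (say, an H1 followed directly by an H3), which often means headings were chosen for their look rather than their importance. `HeadingParser` and `audit_headings` are hypothetical names.

```python
from html.parser import HTMLParser

HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class HeadingParser(HTMLParser):
    """Records each heading tag (h1 to h6) and its text, in page order."""

    def __init__(self):
        super().__init__()
        self.headings = []      # list of (level, text) pairs
        self._current = None    # level of the heading currently open, if any
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_TAGS:
            self._current = int(tag[1])
            self._buffer = []

    def handle_endtag(self, tag):
        if tag in HEADING_TAGS and self._current is not None:
            text = " ".join("".join(self._buffer).split())
            self.headings.append((self._current, text))
            self._current = None

    def handle_data(self, data):
        if self._current is not None:
            self._buffer.append(data)


def audit_headings(html):
    """Return the heading outline, the number of H1s, and any skipped levels."""
    parser = HeadingParser()
    parser.feed(html)
    h1_count = sum(1 for level, _ in parser.headings if level == 1)
    # A jump of more than one level (h1 straight to h3) breaks the outline.
    skips = [
        (prev, cur)
        for (prev, _), (cur, _) in zip(parser.headings, parser.headings[1:])
        if cur > prev + 1
    ]
    return {"headings": parser.headings, "h1_count": h1_count, "level_skips": skips}
```

An `h1_count` of 0, or an outline full of skipped levels, is worth raising with your webmaster.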

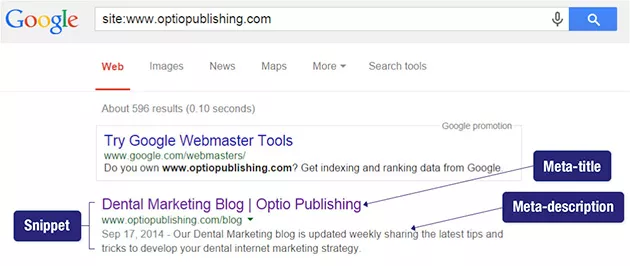

3. Meta titles & descriptions – These are the title and description of each web page itself. Not only do they tell Google what your page is about, they also appear in the search results when a patient finds your page. This search listing is called a “snippet.” You can find a page’s title quickly: just hover over its tab along the top of your web browser to see what comes up. If every page on your website shows only your practice name, then you know the titles have not been filled in.

Another way to discover whether your titles and meta descriptions are in place is to do a site: search in Google. Type “site:” followed by your website address with no space (for example, site:yourpractice.com). The results will display your titles and meta descriptions. If the description under a result is just the first paragraph of that page, the meta description probably wasn’t filled in, since Google often falls back to page text when one is missing.
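The same two checks (is the title more than your practice name, and is the meta description real?) can also be run against a page’s HTML directly. Below is a minimal Python sketch built on the standard library’s `html.parser`: it reads the `<title>` element, the `<meta name="description">` tag, and the first paragraph, then lists any problems. The class and function names, and the practice name used in the example, are hypothetical.

```python
from html.parser import HTMLParser


class MetaParser(HTMLParser):
    """Pulls out the <title>, the meta description, and the first <p> of a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = None   # stays None if there is no meta description tag
        self.first_paragraph = None
        self._in_title = False
        self._in_p = False
        self._p_text = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = " ".join((attrs.get("content") or "").split())
        elif tag == "p" and self.first_paragraph is None and not self._in_p:
            self._in_p = True
            self._p_text = []

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False
        elif tag == "p" and self._in_p:
            self.first_paragraph = " ".join("".join(self._p_text).split())
            self._in_p = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data
        if self._in_p:
            self._p_text.append(data)


def audit_meta(html, practice_name):
    """Report missing or placeholder titles and descriptions for one page."""
    parser = MetaParser()
    parser.feed(html)
    title = " ".join(parser.title.split())
    problems = []
    if not title or title.lower() == practice_name.lower():
        problems.append("title is missing or only the practice name")
    if not parser.description:
        problems.append("meta description is missing")
    elif parser.description == parser.first_paragraph:
        problems.append("meta description just repeats the first paragraph")
    return {"title": title, "description": parser.description, "problems": problems}
```

An empty `problems` list means both elements are present and distinct; it doesn’t judge whether they’re well written.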

4. Location information – Having your physical address on every page of your website is important for local search results. You may not be able to work your location-based keywords into your copy naturally, so having location information somewhere on the page can be critical. We generally put the address in the footer section of the website. This also means that no matter where your potential patient is on your website, they can get your contact information quickly and easily.
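If you have copies of several pages’ HTML, confirming that the address appears on every one is easy to script. This Python sketch is deliberately crude: it strips tags with a regular expression rather than a real HTML parser, and it looks for each piece of the address as exact text after ignoring case and extra spaces, so “St” and “Street” would not match. The function names, the address, and the page paths are hypothetical.

```python
import re
from html import unescape


def visible_text(html):
    """Crudely strip tags and entities, then lower-case and collapse whitespace."""
    text = unescape(re.sub(r"<[^>]+>", " ", html))
    return " ".join(text.lower().split())


def pages_missing_address(pages, address_parts):
    """Return the URLs whose text lacks any of the given address pieces.

    pages maps a URL (or path) to that page's HTML; address_parts is a list
    such as ["123 Main St", "Springfield"]. Matching is exact text after
    normalizing case and whitespace.
    """
    wanted = [" ".join(part.lower().split()) for part in address_parts]
    missing = []
    for url, html in pages.items():
        text = visible_text(html)
        if not all(part in text for part in wanted):
            missing.append(url)
    return missing
```

Checking the street and the city separately lets an address that is split across lines in the footer (with a `<br>` in between) still count as present.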

5. Security certificate – A secure site is now standard for any website. A security (SSL/TLS) certificate lets your site load over https instead of http, so visitors don’t see a “Not secure” warning in the browser’s address bar. On top of that, Google treats https as a ranking signal, so a secure site can help you rank higher.
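Certificates also expire, and an expired one triggers a security warning in the browser. If you want to check yours from a script, the Python sketch below uses only the standard library’s `ssl` and `socket` modules: it opens a verified connection to your domain on port 443, reads the certificate’s expiry date, and converts it to days remaining. It is a minimal sketch; the function names are hypothetical, and a certificate that fails verification will raise an error instead of returning a date.

```python
import socket
import ssl
import time


def fetch_not_after(host, port=443, timeout=10.0):
    """Open a verified TLS connection and return the certificate's expiry string."""
    context = ssl.create_default_context()  # verifies the certificate chain and hostname
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=host) as tls:
            return tls.getpeercert()["notAfter"]


def days_left(not_after, now=None):
    """Whole days until a notAfter timestamp such as 'Jan  1 00:00:00 2030 GMT'."""
    now = time.time() if now is None else now
    return int((ssl.cert_time_to_seconds(not_after) - now) // 86400)
```

For example, `days_left(fetch_not_after("yourpractice.com"))` returns the number of whole days remaining. Many hosts renew certificates automatically, but a small or negative number is worth a call to your webmaster.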

6. No duplicate content – Duplicate content is a concern for small businesses. At worst, Google may penalize you for it. At best, duplicate content can keep you from reaching the number one spot in the search engine results pages.

Search engines treat the address with “www” and the address without “www” as two completely different websites. The same is true for the “http://” and “https://” versions of your site. To make sure Google does not index duplicate copies, your webmaster should set up redirects so that all traffic ends up on one version: www is redirected to non-www (or vice versa), and http is redirected to https. To find out if your developer has done this, type both forms of your web address into two different tabs. When the pages load, the URL in the address bar should be identical in both tabs.

In addition, you can use the site: search to see if there is any other duplicate content.
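The two-tab test above can be extended to all four combinations of http/https and www/non-www. This Python sketch uses the standard library’s `urlopen`, which follows redirects, and records the address each variant finally lands on; if the redirects are set up, all four should match. The `fetch` parameter exists so the comparison can be tried without a network connection, and the function names and example domain are hypothetical.

```python
from urllib.request import urlopen


def final_url(url, timeout=10.0):
    """Request url, follow any redirects, and return the address we end up on."""
    with urlopen(url, timeout=timeout) as resp:
        return resp.url


def check_variants(domain, fetch=final_url):
    """Load the http/https and www/non-www forms of a domain and compare where they land."""
    bare = domain[4:] if domain.startswith("www.") else domain
    variants = [
        f"{scheme}://{host}/"
        for scheme in ("http", "https")
        for host in (bare, "www." + bare)
    ]
    landed = {variant: fetch(variant) for variant in variants}
    # With redirects in place, all four variants end on one identical address.
    return landed, len(set(landed.values())) == 1
```

`check_variants("yourpractice.com")` returns the landing address for each variant and `True` only if they all agree. A variant that fails to load at all (for instance, a www address without a valid certificate) raises an error, which is itself worth reporting to your developer.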

7. Redirects – If your site is a redesign or a completely new site (and you had a different site in the past), set up redirects so that people can still find you. Patients might still have your old addresses from an outdated link to your website or from a bookmark.

To see if redirects are in place, type in the old address and hit enter. This should take you to a page on your new site. If the old site is still coming up or you get a 404 error, then you have a problem. Speak to your webmaster.
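If your old site had many pages, checking each old address by hand gets slow. The Python sketch below takes a list of old addresses, follows any redirects with the standard library’s `urlopen`, and labels each one: an error status such as 404, still landing on a host other than your new one, or landing on the new site. It is a minimal example under a few assumptions: you supply the old URLs yourself (from bookmarks, an old sitemap, or your webmaster), `new_host` is your new domain, and all function names are hypothetical.

```python
from urllib.error import HTTPError
from urllib.parse import urlsplit
from urllib.request import urlopen


def fetch_status(url, timeout=10.0):
    """Return (status, final_url), following redirects; 4xx/5xx come back as a status."""
    try:
        with urlopen(url, timeout=timeout) as resp:
            return resp.status, resp.url
    except HTTPError as err:
        return err.code, url


def check_old_urls(old_urls, new_host, fetch=fetch_status):
    """Classify where each old address ends up."""
    report = {}
    for old in old_urls:
        status, landed = fetch(old)
        if status >= 400:
            report[old] = f"error {status}"  # e.g. 404: nothing redirects this address
        elif urlsplit(landed).hostname != new_host:
            report[old] = f"still on old site ({landed})"
        else:
            report[old] = f"ok, lands on {landed}"
    return report
```

An old domain that no longer resolves at all raises a `URLError` instead of returning a status; that also means patients following old links are getting nowhere.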

8. Speed test – Now that people browse websites on their mobile phones more than on desktops, it’s important that your website loads quickly. Run your site through Google’s PageSpeed Insights test to see how it stacks up, and ask your developer to help you address any technical issues it highlights.
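Google also offers the PageSpeed Insights test as a web API, which is handy for checking several pages at once. The Python sketch below assumes the v5 `runPagespeed` endpoint and reads the performance score (0 to 1 in the response, shown here as 0 to 100) from the Lighthouse section of the JSON reply. Treat the endpoint and response fields as assumptions to confirm against Google’s API documentation; heavier use may require an API key, and the helper names are hypothetical.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed PageSpeed Insights v5 endpoint.
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url, strategy="mobile"):
    """Build the API request for one page; strategy is "mobile" or "desktop"."""
    return PSI_ENDPOINT + "?" + urlencode({"url": page_url, "strategy": strategy})


def performance_score(report):
    """Pull the 0-100 performance score out of a decoded PageSpeed Insights response."""
    score = report["lighthouseResult"]["categories"]["performance"]["score"]
    return round(score * 100)


def run(page_url, strategy="mobile"):
    """Call the API and return the performance score (network access required)."""
    with urlopen(psi_request_url(page_url, strategy), timeout=120) as resp:
        return performance_score(json.load(resp))
```

`run("https://yourpractice.com/")` requests a mobile test by default; pass `strategy="desktop"` for the desktop version. A full test can take tens of seconds, hence the long timeout.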

9. Sitemap – Your sitemap is an invitation to the search engines to index your website. To find out if you have one, type your domain followed by “/sitemap.xml” (for example, yourpractice.com/sitemap.xml). Most sitemaps live at this address, but if you don’t see yours there, ask your webmaster where it is located.

The sitemap should be submitted to the search engines through their webmaster tools, such as Google Search Console (Google has retired its older sitemap “ping” method). With a new site, there’s no easy way to see whether this has been done unless you have access to your Google Search Console account. If your pages already show up in search results, search engines are finding and indexing your site, although that alone doesn’t prove the sitemap itself was submitted.
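Once you’ve found the sitemap, you can list what it contains to confirm your important pages are included. Sitemaps follow the XML format published at sitemaps.org: a `<urlset>` lists page addresses, while a `<sitemapindex>` points to further sitemap files (many website platforms generate one of these). This Python sketch parses either kind with the standard library’s `xml.etree.ElementTree`; it assumes the sitemap sits at `/sitemap.xml`, and the function names are hypothetical.

```python
import xml.etree.ElementTree as ET
from urllib.request import urlopen

NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def fetch_sitemap(site_root, timeout=10.0):
    """Download <site_root>/sitemap.xml (the common, but not guaranteed, location)."""
    with urlopen(site_root.rstrip("/") + "/sitemap.xml", timeout=timeout) as resp:
        return resp.read()


def sitemap_urls(xml_data):
    """Return ("urlset", page URLs) or ("sitemapindex", child sitemap URLs)."""
    root = ET.fromstring(xml_data)
    kind = root.tag.rsplit("}", 1)[-1]  # drop the XML namespace prefix
    child = "sm:url" if kind == "urlset" else "sm:sitemap"
    locs = [
        el.findtext("sm:loc", default="", namespaces=NS).strip()
        for el in root.findall(child, NS)
    ]
    return kind, locs
```

If the result is a `sitemapindex`, run `sitemap_urls(fetch_sitemap(...))` style checks on each child file it lists to see the actual pages.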

10. Robots.txt – Every site should have a robots.txt file. Like the sitemap, the robots.txt file helps search engines understand how to crawl your site, but it works from the other direction: it tells search engines what not to crawl. To see whether your website has one, type “/robots.txt” after your website URL in any browser.

You should see “User-agent: *” somewhere on the page with some lines below it. The * means the rules that follow apply to all search engines, and everything may be crawled except the paths listed on “Disallow:” lines. If you see “Disallow: /” (a lone slash) under “User-agent: *”, that’s bad. Really bad. That means everything on your website is blocked. Contact your webmaster right away.
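You can also test a robots.txt file against specific crawlers with Python’s standard `urllib.robotparser`. The sketch below asks whether Googlebot, Bingbot, and a generic crawler may fetch a given page; all `False` means the page is blocked for everyone. It’s a minimal example: the standard-library parser handles the basic `User-agent`/`Disallow` rules but not every extension Google supports (such as `*` wildcards inside paths), and the function name and domain are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Crawler names to test; "*" stands for any crawler without rules of its own.
CRAWLERS = ("Googlebot", "Bingbot", "*")


def robots_report(robots_txt, page_url):
    """Map each crawler name to whether robots_txt lets it fetch page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, page_url) for agent in CRAWLERS}
```

Paste the text you see at /robots.txt into `robots_report(text, "https://yourpractice.com/")`. A file containing only `User-agent: *` and `Disallow: /` returns `False` for every crawler, while an empty `Disallow:` line allows everything.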

If you made it through the list – congratulations! You might still have some questions, so feel free to shoot us an email. We’d be happy to point you in the right direction.